I was approached by a client recently with an interesting query around SharePoint WAN performance. The client in question had a server farm located in the UK, but wanted to access their SharePoint sites from a branch office in Australia. They were surprised that download speeds were significantly slower from the Australian branch office than from their UK offices. Slow downloads over a WAN link certainly aren’t a problem that is specific to SharePoint – but they are relevant given that sharing Office documents is core functionality. In my experience, users sometimes forget or don’t realise that when a document is “opened”, it is first downloaded to their client.

<start of story part, skip if you don’t like stories and want the post conclusion :-] >

Of course, my initial thought was something along the lines of “it’s a 30,000km round trip; of course it’s slower!”. However, before jumping to the conclusion that geographic distance was solely responsible for the slow download speed, I figured a logical course of action would be to replicate the client’s scenario under the same conditions (aside from distance to the server). After all, the fact that they were typically accessing data in a different time zone may have meant that operations running on the server (e.g. backups) were having an impact. I arranged a conference call for 8AM BST (17:00 AEST; the Aussies are 9 hours ahead), and we both attempted to download a file from the same SharePoint 2007 site.

For a 4MB file, they reported an average download (opening) time of approximately 2 minutes. The exact same operation from my desk in the UK finished in about 12 seconds – around 10 times quicker! Given that we both reported a similar available bandwidth for downloads (around 3Mbit/s), I concluded that distance must be the cause of the issue, and mentioned that propagation delay has a significant impact on download speeds.

Their follow-up to this was “OK, 30,000km sounds like a lot, but doesn’t data travel at the speed of light across optical fibre? Why would a download delay of 3 * 10^8 cause a 4MB file to take several times as long to download given the same available bandwidth?”.

As I am sure you will have noticed, the information contained in the question above is not entirely accurate.

While it is true that in a best case scenario a signal can propagate through free space at the speed of light (3 × 10^8 m/s), that is far from the reality of this situation. The signal is travelling through the minefield that is the Internet, and while some of that journey will include propagation through optical fibre (i.e. NOT free space; a signal travels at about 2/3 of the speed of light here, or 2 × 10^8 m/s), the “last mile” will likely include a copper highway that will need to be traversed. Additionally, the end-to-end delay will also include the time taken to traverse any hops (routers, switches etc.) along the way. In this case, we measured the RTT (round trip time, or latency) from the client and they reported a delay of around 350ms.

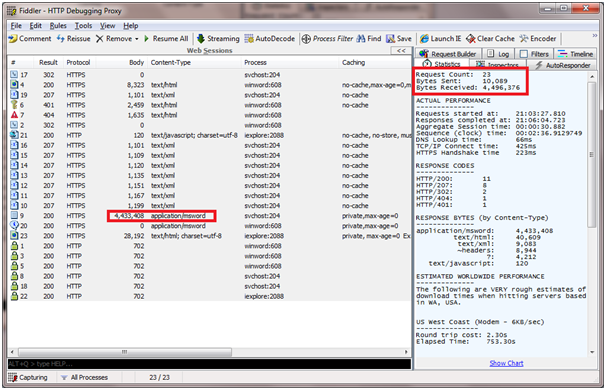
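To put rough numbers on this, here is a minimal Python sketch that compares the pure propagation time for the 30,000km round trip against the measured RTT. It uses only the rounded figures quoted above; the constant and function names are my own, and it assumes (unrealistically) that the whole path is a single straight run of one medium.

```python
# Back-of-the-envelope propagation delay for the UK <-> Australia round trip.
# All inputs are the rounded figures quoted in the post; names are illustrative.

ROUND_TRIP_M = 30_000 * 1_000   # 30,000 km round trip, in metres
SPEED_FREE_SPACE_M_S = 3e8      # speed of light in free space
SPEED_FIBRE_M_S = 2e8           # roughly 2/3 of that in optical fibre
MEASURED_RTT_S = 0.350          # RTT reported by the client


def propagation_delay_s(distance_m: float, speed_m_s: float) -> float:
    """Time for a signal to cover distance_m at a constant speed_m_s."""
    return distance_m / speed_m_s


free_space_rtt = propagation_delay_s(ROUND_TRIP_M, SPEED_FREE_SPACE_M_S)
fibre_rtt = propagation_delay_s(ROUND_TRIP_M, SPEED_FIBRE_M_S)
remainder = MEASURED_RTT_S - fibre_rtt  # hops, copper, queuing, longer routes

print(f"free space:     {free_space_rtt * 1000:.0f} ms")
print(f"optical fibre:  {fibre_rtt * 1000:.0f} ms")
print(f"everything else: {remainder * 1000:.0f} ms")
```

Even in fibre, propagation alone accounts for about 150ms of the 350ms; the rest comes from the hops, the copper and the fact that cables do not follow a straight line.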

So the client got his facts slightly wrong about propagation delay over a WAN. However, that still doesn’t really answer the initial query. As far as the client was concerned, a file download operation only generates a few HTTP requests, none of which should add up to a delay of over a minute. I used Fiddler 2 to validate this by inspecting the number of requests and the size of each response in bytes. You can see in the screenshot below that there were 23 requests in total, and a total received byte count of 4,496,376 (4.28 megabytes). Only 62,968 of those received bytes were in the body of HTTP responses other than the transfer of the Word document itself, which was around 4.23 megabytes.

Fiddler 2 screenshot showing a total received byte count of around 4.28 megabytes

So with an available bandwidth of 3Mbit/s for downloads, why would a 4.28MB file take 2 minutes to download from Australia as opposed to around 12 seconds in the UK? Why does geographic distance have such a huge impact?
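To make the puzzle concrete, here is a small Python sketch of what bandwidth alone would predict. It assumes “3Mbit/s” means 3,000,000 bits per second and takes the two observed times (12 seconds and 2 minutes) at face value; the names are illustrative.

```python
# What would the download take if bandwidth were the only limit?
# Byte count is the Fiddler total; observed times are the figures reported above.

RECEIVED_BYTES = 4_496_376     # total received bytes reported by Fiddler
BANDWIDTH_BIT_S = 3_000_000    # ~3 Mbit/s, assuming decimal megabits
OBSERVED_UK_S = 12             # approximate download time in the UK
OBSERVED_AU_S = 120            # approximate download time in Australia


def transfer_time_s(size_bytes: int, rate_bit_s: float) -> float:
    """Seconds needed to move size_bytes at a sustained rate_bit_s."""
    return size_bytes * 8 / rate_bit_s


def effective_rate_bit_s(size_bytes: int, elapsed_s: float) -> float:
    """Average rate actually achieved for a transfer that took elapsed_s."""
    return size_bytes * 8 / elapsed_s


bandwidth_only = transfer_time_s(RECEIVED_BYTES, BANDWIDTH_BIT_S)
uk_rate = effective_rate_bit_s(RECEIVED_BYTES, OBSERVED_UK_S)
au_rate = effective_rate_bit_s(RECEIVED_BYTES, OBSERVED_AU_S)

print(f"bandwidth-only estimate: {bandwidth_only:.1f} s")
print(f"effective UK rate:       {uk_rate / 1e6:.2f} Mbit/s")
print(f"effective AU rate:       {au_rate / 1e6:.2f} Mbit/s")
```

The bandwidth-only estimate is almost exactly the UK time, so the UK download is using the full link. The Australian download averages only about a tenth of the link, so something other than bandwidth is the limit there.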

The answer is found a little lower than the application layer. While I think it’s somewhat easier to think in the context of HTTP requests between the client and server, that view doesn’t help us determine the expected data throughput beyond providing the number of bits in transit. There are physical and logical limitations at various layers in the TCP/IP model, but the easiest one to focus on in terms of abstraction is probably the transport layer – to be specific, TCP.

Anyone who has studied networking will be familiar with the Transmission Control Protocol (TCP). It was designed to provide a dynamic, reliable end-to-end byte stream over an unreliable internetwork such as the Internet. TCP throughput dictates the speed at which traffic originating from the application layer can travel, given that application (HTTP) messages are encapsulated by the underlying layers in the TCP/IP stack.

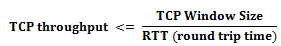

To illustrate the impact that latency has on TCP throughput, I have included a formula from this :

As shown, TCP window size and round trip time have a huge impact on TCP throughput. The window size can be adjusted based on the TCP implementation, but the round trip time is largely dependent on distance. You may be wondering why one can’t simply increase the window size to mitigate the effects of latency and improve throughput – you’ll have to wait until next time (or <insert popular search engine name here> it) for an answer on that.
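The relationship described above is commonly written as the upper bound below. I offer it as a sketch, on the assumption that it matches the formula being referred to; with the window size in bits and the RTT in seconds, the throughput comes out in bit/s.

```latex
\text{Throughput} \le \frac{\text{TCP window size}}{\text{RTT}}
```

The sender can have at most one window of unacknowledged data in flight per round trip, so for a fixed window the ceiling on throughput drops in direct proportion as the RTT grows.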

<end of story part, you probably want to hop back in here :-] >

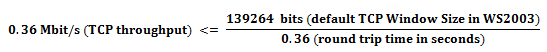

So back to our tale of woe (accessing a UK based data centre from Australia): do the numbers add up?

Theoretically, 0.36 Mbit/s for a 4MB file should mean that the file is downloaded to our Aussie friends in just under 89 seconds (1 minute and 29 seconds). However, the formula above doesn’t account for error rates and fluctuations in latency so the 2 minute time they reported certainly doesn’t seem unreasonable. Plugging the “UK” latency figure in (around 0.035s RTT) gives a theoretical (best case) figure of 8.4 seconds – which is just under my actual time of 12 seconds.
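The arithmetic can be checked with a short Python sketch. It assumes a window-limited model (throughput = window / RTT), decimal units (4MB = 32,000,000 bits) and the rounded RTT figures; the function names are mine. Rather than guessing a window size, it backs the window out of the 0.36 Mbit/s figure and reuses it for the UK.

```python
# Check the window-limited numbers using only the figures quoted above.
# Assumes throughput = window / RTT and decimal megabits/megabytes.

FILE_BITS = 4 * 1_000_000 * 8   # 4 MB file -> 32,000,000 bits
AU_THROUGHPUT_BIT_S = 0.36e6    # theoretical throughput quoted for Australia
AU_RTT_S = 0.35                 # measured Australian RTT
UK_RTT_S = 0.035                # approximate UK RTT


def download_time_s(file_bits: float, throughput_bit_s: float) -> float:
    """Seconds to move file_bits at a sustained throughput."""
    return file_bits / throughput_bit_s


def implied_window_bits(throughput_bit_s: float, rtt_s: float) -> float:
    """Window size that would produce this throughput at this RTT."""
    return throughput_bit_s * rtt_s


def window_limited_throughput(window_bits: float, rtt_s: float) -> float:
    """Upper bound on throughput for a given window and RTT."""
    return window_bits / rtt_s


au_time = download_time_s(FILE_BITS, AU_THROUGHPUT_BIT_S)
window_bits = implied_window_bits(AU_THROUGHPUT_BIT_S, AU_RTT_S)
uk_throughput = window_limited_throughput(window_bits, UK_RTT_S)
uk_time = download_time_s(FILE_BITS, uk_throughput)

print(f"AU download time: {au_time:.1f} s")
print(f"implied window:   {window_bits / 8:.0f} bytes")
print(f"UK throughput:    {uk_throughput / 1e6:.2f} Mbit/s")
print(f"UK download time: {uk_time:.1f} s")
```

With the RTT rounded to exactly 0.035s this gives roughly 8.9 seconds for the UK rather than 8.4, which suggests the measured UK RTT was a little lower than the rounded figure. Note also that the UK window-limited throughput comes out above the roughly 3Mbit/s link, so in the UK the bandwidth, not the window, is plausibly the real ceiling.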

So is poor TCP performance over a WAN a SharePoint problem? No. It is a throughput problem that can only really be resolved by either (a) closing the geographic distance (reducing latency) or (b) using an improved protocol implementation (increasing window size). Adding bandwidth doesn’t help a great deal in this scenario.
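To see why extra bandwidth doesn’t help, it is useful to work out how much data must be in flight to keep the link busy (the bandwidth-delay product). The Python sketch below uses the 3Mbit/s and 350ms figures from this post. The 65,535 byte constant is the largest window the 16-bit TCP header field can express without the window scaling option. The names are illustrative.

```python
# How large a TCP window is needed to fill the link at a given RTT?
# Bandwidth and RTT are the figures from the post; decimal megabits assumed.

BANDWIDTH_BIT_S = 3_000_000        # ~3 Mbit/s available for downloads
AU_RTT_S = 0.35                    # measured Australian RTT
MAX_UNSCALED_WINDOW_BYTES = 65_535 # 16-bit window field, no window scaling


def bandwidth_delay_product_bytes(rate_bit_s: float, rtt_s: float) -> float:
    """Bytes that must be in flight to keep a link of rate_bit_s full."""
    return rate_bit_s * rtt_s / 8


def window_limited_throughput(window_bytes: float, rtt_s: float) -> float:
    """Upper bound on throughput (bit/s) for a given window and RTT."""
    return window_bytes * 8 / rtt_s


needed_window = bandwidth_delay_product_bytes(BANDWIDTH_BIT_S, AU_RTT_S)
best_unscaled = window_limited_throughput(MAX_UNSCALED_WINDOW_BYTES, AU_RTT_S)

print(f"window needed to fill the link: {needed_window:.0f} bytes")
print(f"best case with a 65,535 byte window: {best_unscaled / 1e6:.2f} Mbit/s")
```

At 350ms the link needs around 131,250 bytes in flight to stay full, about twice what an unscaled window allows. Until the window grows, a fatter pipe simply sits idle while the sender waits for acknowledgements.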

Join me next time for a look at TCP throughput improvements in Windows Server 2008, WAN accelerators and SharePoint Online (BPOS) – all of which should be considerations if you are looking at a geographically distributed SharePoint deployment.