| 11/07/2011 Update: I have had some feedback around this article that made me realise that I inadvertently gave RBS a bit of a bashing. It’s a useful technology that – used in the right scenarios – can significantly improve maintainability and scalability. The main point of the RBS section was to debunk the myth that RBS provides a means of bypassing the [current] data sizing boundaries that Microsoft provide. |

|---|

A recent post on the SharePoint Product Team blog has caused quite a stir.

This is not least because in specific scenarios, Microsoft have confirmed that they will support customers with content databases that are between 200GB and 4TB in size, and in one scenario have removed the SharePoint supported limitation altogether (thus meaning that customers will be limited to the ).

I encourage you to read through the barrage of decent information that has already been published online, including:

- from Microsoft – this is the post that everyone is talking about.

- from Paul Andrew.

- From Joel Oleson.

- From Wictor Wilén

Whilst the SharePoint Product Team blog includes details around using the SQL RBS FILESTREAM provider to enable iSCSI connected storage (and therefore lower cost NAS storage), the focus of this post is on the sizing recommendations.

Before I begin, note that none of the information below is official – it is simply my own interpretation of the recent guidance.

A step back

Let’s start by rewinding a couple of weeks. Way back then, there were some heated debates on Twitter and various forums surrounding the 200GB supported content database limitation. In particular, the main theme was whether or not customers could use RBS to get around this limit.

This is largely because, prior to the SP1 guidance, we only had a 200GB supportability limit to work toward for collaboration scenarios, which led customers to question the scalability of SharePoint. It seemed logical to conclude that – given the 200GB limitation – storing BLOBs outside of the SQL DB would mean that only metadata stored within the DB itself would count towards that limit. This misconception was encouraged by a lack of guidance from Microsoft (the made no mention of RBS) and no concrete explanation around the sizing limits.

The options for customers were limited to:

- Assuming that RBS provided a means of bypassing the 200GB limitation and scaling up or,

- Assuming that the 200GB limitation included metadata and BLOBs, regardless of BLOB location and scaling out by creating new Content Databases.

I think it’s worth mentioning at this stage that the advice back then was – and still is – to ensure that site collections remain below 100GB in order to allow for backup/restore operations. The one exception to this is where a site collection is the only one stored within a content DB, in which case the size of the site collection is limited to the size of the content DB and backup/restore operations must be taken at the SQL DB level.
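The site collection rule above can be sketched as a small decision function. This is only an illustration of the guidance as I read it: the function name, parameters and return strings are hypothetical and are not part of any SharePoint API.

```python
# Hypothetical helper illustrating the site collection guidance quoted above.
# None of these names exist in SharePoint; they are assumptions for this sketch.

SITE_COLLECTION_LIMIT_GB = 100  # figure taken from the guidance in this post


def backup_approach(site_collection_gb: float, site_collections_in_db: int) -> str:
    """Suggest a backup/restore approach for one site collection."""
    if site_collection_gb < SITE_COLLECTION_LIMIT_GB:
        # Small enough for site collection level backup/restore.
        return "site collection backup/restore"
    if site_collections_in_db == 1:
        # The exception: a sole site collection may grow with its content DB,
        # but backups must then be taken at the SQL DB level.
        return "SQL DB level backup/restore"
    # Large site collection sharing a content DB: outside the guidance.
    return "outside guidance: move to its own content DB or reduce size"


print(backup_approach(40, 12))
print(backup_approach(150, 1))
print(backup_approach(150, 3))
```

The third branch is my own reading of what follows when neither condition holds; the guidance itself only states the limit and the exception.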

OK, what about this 4TB business?

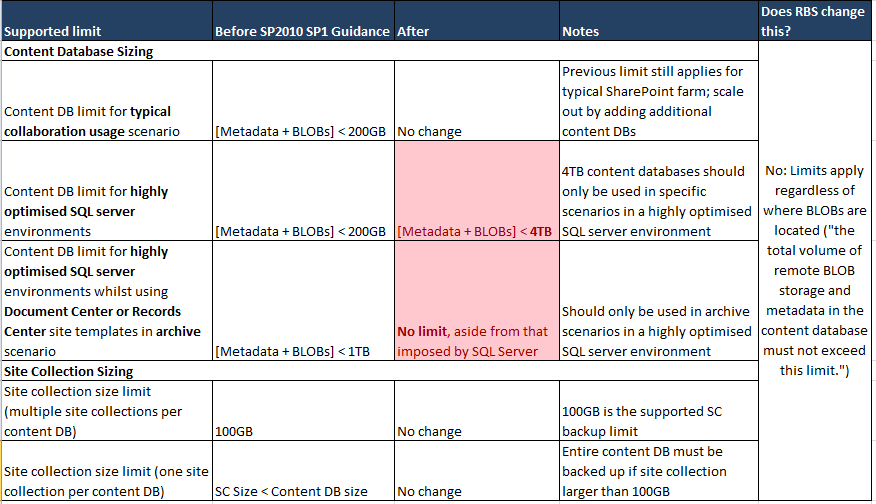

Fast forward to today and we have some new guidance from the SharePoint Product Group based on lessons learned over the last 12 months. Rather than regurgitate this information I refer you to the links at the top of this post, and have attempted to provide a summary of the sizing changes:

* “Highly Optimised” refers to the guidance contained within the document. In particular, the Content Database Limits section lists specific requirements that need to be met.

There are a few points to note in the above table:

- The original 200GB recommendation remains for the majority of scenarios.

- The new supported scenarios only apply to optimised environments.

- There are no changes in terms of site collection limits.

- RBS doesn’t change any of the sizing recommendations.
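As a rough sketch, the points above can be expressed as a lookup of the supported content database size. The 200GB and 4TB figures and the "no SharePoint limit" archive case come from this post; the function and parameter names are hypothetical, 4TB is assumed to mean 4 × 1024GB, and the real guidance lists further requirements that this sketch ignores.

```python
from typing import Optional

# Figures taken from this post; names below are assumptions for illustration.
GENERAL_LIMIT_GB = 200
OPTIMISED_LIMIT_GB = 4 * 1024  # assuming 4TB means 4 x 1024GB


def supported_limit_gb(highly_optimised: bool,
                       document_archive: bool = False,
                       uses_rbs: bool = False) -> Optional[int]:
    """Return the supported content DB size in GB, or None where SharePoint
    itself imposes no size limit.

    uses_rbs is accepted only to make the point that it is deliberately
    ignored: RBS does not change any of the sizing recommendations.
    """
    if not highly_optimised:
        # The original recommendation still applies to most environments.
        return GENERAL_LIMIT_GB
    if document_archive:
        # The one scenario where the SharePoint limit was removed altogether.
        return None
    return OPTIMISED_LIMIT_GB


print(supported_limit_gb(highly_optimised=False, uses_rbs=True))
print(supported_limit_gb(highly_optimised=True))
print(supported_limit_gb(highly_optimised=True, document_archive=True))
```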

Hang on; RBS makes no difference to content DB sizing?

Here is the from Microsoft:

“The content database size includes both metadata and BLOBs regardless of where the BLOBs are located and use of RBS does not bypass or increase these limits.”

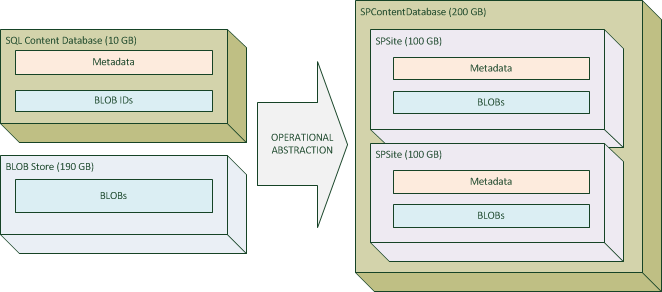

I think the formula below helps simplify this statement:

Content database size == [Metadata + BLOBs], regardless of where the BLOBs are stored.

This is not new and was the case prior to the SP1 guidance.

Although this is a gross oversimplification (I deliberately miss off various GUIDs including those that identify the SPSite objects), let’s look at a straightforward example to illustrate this:

In this example, we have reached the supported “Content database size” limitation for a typical collaboration usage scenario. Without a highly optimised SQL Server environment and advanced disaster recovery story we will need to scale out by adding additional content databases and potentially moving site collections using Move-SPSite.

Limits apply at the abstracted level, i.e. SPContentDatabase and SPSite. So although in the above scenario the actual SQL Content DB is only 10GB in size (the metadata in this case makes up 5% of SPContentDatabase), the “Content Database Size” as far as SharePoint is concerned is 200GB, and we have therefore reached the supported limit for environments that do not meet the SQL Server optimisation requirements.
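The arithmetic in this example can be written out as a minimal sketch. The 10GB and 200GB figures are from the scenario above; the 190GB of remote BLOBs is inferred as the difference, and the function name is hypothetical rather than anything SharePoint exposes.

```python
# Minimal sketch of: content database size == metadata + BLOBs,
# regardless of where the BLOBs are stored. Names are illustrative only.

GENERAL_LIMIT_GB = 200  # supported limit for non-optimised environments


def content_database_size_gb(metadata_gb: float, blobs_gb: float) -> float:
    """Size as SharePoint counts it: BLOBs count even when stored via RBS."""
    return metadata_gb + blobs_gb


metadata_gb = 10       # what actually sits in the SQL content DB
remote_blobs_gb = 190  # inferred: BLOBs held outside SQL via RBS

total_gb = content_database_size_gb(metadata_gb, remote_blobs_gb)
metadata_share = 100 * metadata_gb / total_gb

print(total_gb)                      # 200
print(metadata_share)                # 5.0
print(total_gb >= GENERAL_LIMIT_GB)  # True: the supported limit is reached
```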

| 10/07/2011 Update: kindly reviewed this post and suggested I add an explanation as to why utilising RBS doesn’t affect the supported limits. Fortunately, covers this off nicely: “Activating RBS so that your 100 2GB files can be pushed out of the database file is not going to change the fact that SharePoint is only processing 100 rows of data. Put simply, the effort required to process the relational, structured data that is used to browse and present the SharePoint UI (and related content) will NOT be significantly impacted/benefited by RBS. If you have large lists in SharePoint, you’re still going to have large lists after RBS, and that is where SQL is spending most of its time… sorting through the rows of data necessary to present SharePoint content. It isn’t until you click directly on the file link that RBS is really even doing anything.”

Chris makes several good points here, one being that 100 items still means that 100 rows need to be processed, RBS or not. |

|---|

So… what’s the point of RBS?

We now know that RBS is not there to help us get around the content DB sizing limitations – those of you who have deployed RBS knew that already though, right? :-).

For those of us who haven’t, and are considering RBS for our environments, let’s walk through a few valid scenarios based on the Product Team’s blog:

- Taking advantage of commodity storage – this is the key scenario and the new support for iSCSI connected storage improves this story.

- Document Archive scenarios – BLOBs are immutable so frequent writes can reduce performance.

- Getting around the 10GB content DB limitation imposed by SQL Server 2008 R2 Express: FILESTREAM data doesn’t count toward the limit.

- Improving performance where BLOBs are, on average, larger than 1MB (if the average BLOB size is below 256KB they may as well be stored in-line; 256KB-1MB is a grey area and it depends on your environment).

- [11/07/2011] Enhancing maintainability:

  - Improving performance during index rebuild maintenance tasks.

  - Reducing the effective size of the SharePoint content database and backup size.

  - Reduced transaction-log-write overheads, the benefit of which will depend on the frequency with which you truncate the transaction log during a backup.
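The BLOB size rule of thumb from the list above can be sketched as a simple classifier. The 256KB and 1MB thresholds are the ones quoted in this post; the function name and return values are hypothetical, and the result is a hint rather than a measurement of your environment.

```python
# Hypothetical classifier for the average-BLOB-size rule of thumb above.
KB = 1024
MB = 1024 * KB


def blob_storage_hint(average_blob_bytes: int) -> str:
    """Suggest where BLOBs are likely best stored, by average size."""
    if average_blob_bytes < 256 * KB:
        return "inline"     # small BLOBs may as well stay in the database
    if average_blob_bytes <= 1 * MB:
        return "grey area"  # depends on your environment; test before deciding
    return "rbs"            # larger BLOBs are where RBS can help performance


for size in (100 * KB, 512 * KB, 5 * MB):
    print(size, blob_storage_hint(size))
```

How the boundary values themselves (exactly 256KB or exactly 1MB) are treated is my own choice; the guidance does not say.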

Another benefit offered by RBS is the capability to perform shallow copies whilst using the Move-SPSite cmdlet. This can be a big time saver if you frequently migrate site collections between content databases, and was added in In essence, structured data is moved between content databases whilst unstructured data (remote BLOBs) remains untouched.

| 10/07/2011 Update:

Wictor also highlighted the new content database item limit: items including documents and list items. We can use this number alongside the other content DB sizing limits to review the supported extremes in the context of RBS:

The second scenario really underlines the point that RBS is most useful for storing a small number of large (> 1MB) BLOBs, as opposed to a large number of small (< 256 KB) BLOBs. We should note that in the third, read-only archive scenario, BLOBs are immutable, which means that frequent updates will limit performance in a large scale (> 4TB) document repository, RBS or not.

For further reading on valid RBS scenarios I suggest checking out (Chris is a PFE and MCM candidate). |

|---|

Wrap up

- The new supported limits are great news for customers with highly optimised SQL Server environments and an advanced disaster recovery strategy.

- For the general usage scenarios, 200GB is still king and environments can scale out by adding additional databases.

- The content database sizing recommendations apply at the SharePoint Content Database (SPContentDatabase) level, not SQL DB level.

- There are several scenarios in which RBS can be useful but bypassing the supported DB sizing limits is not one of them.

Further reading:

[11/07/2011]

| 11/07/2011 Update: Rob Doria, a man and proponent of RBS has on the new guidance from Microsoft. If you are considering the technology, the article makes for a good read, and although it’s important to stay within the current Microsoft supportability boundaries, I also think that it’s useful to consider all points of view. |

|---|