| Although most of this article is still correct, things are slightly different in SQL Server 2012. See |

|---|

It’s been a while since I’ve written an article that highlights a common misconception. This isn’t least because the last time I did this I received comments from one or two apparently disgruntled readers. No one likes to be told that they are wrong and in fairness, that particular article made a couple of sweeping statements. This was somewhat deliberate to spark a discussion and the article triggered a fair number of comments and follow up emails.

However, I do feel that I would be doing the SharePoint community a disservice by not sharing some of my recent findings as an “accidental” DBA for one or two SharePoint implementations I have recently worked on. I have named the series the “accidental SharePoint DBA series” because and is widely understood. OK, adding the word “SharePoint” is in no way original but I think it clearly defines the target audience: I am focussing on the things that I think SharePoint infrastructure people will find useful.

So let’s start this article with a fact that appears to be little-known amongst SharePoint infrastructure folk such as myself:

“In SQL Server 2005, 2008 and 2008 R2, the max memory setting does not define the total amount of memory that SQL Server can use. It defines the maximum buffer pool size.” |

|---|

If you are thinking “well, obviously…” then this article probably isn’t for you. If, like me your initial thought is “that makes no sense… he must be wrong” then you’ve come to the right place.

If we are talking SQL Server certifications or job titles, the answers is a firm “no”. However, I do have around 4 years’ worth of hands-on experience with SQL Server implementations in the wild. This experiences ranges from 4GB, quad core 32-bit machines to 144 GB, dual hex core 64-bit monsters. In all cases I have been looking after SQL server in the context of either SharePoint 2003, 2007 or 2010 (I don’t count 2013 as it has only just been released). In all cases my “experience” has been part time/occasional, mainly because SQL has been one of several applications within my area of responsibility.

Leaving SQL Servers “max memory” setting at the default value of 2147483647 MB (2 Petabytes) means that SQL Server may well use all of your servers memory just for its buffer pool.

This can result in:

- SQL Server non buffer-pool memory usage being paged out to disk

- Unnecessary memory pressure from the OS and other external processes such as AV and backup which can lead to…

- SQL Servers working set being trimmed/paged out which can result in…

- SharePoint being slow.

as follows:

| The buffer pool (sometimes called the buffer cache) is part of the Storage Engine layer in SQL Server and is responsible for managing in-memory copies of portions of the data files in the various databases in the SQL Server instance.- Paul Randal |

|---|

My (oversimplified) way of looking at the buffer pool is that it’s a cache that saves SQL from having to grab data from disk every time a query is run.

Here is an excerpt from :

Except… you want hard proof don’t you? I did too, so I checked the Total Memory counter in PerfMon and compared it to the working set counter for the SQL Server executable:

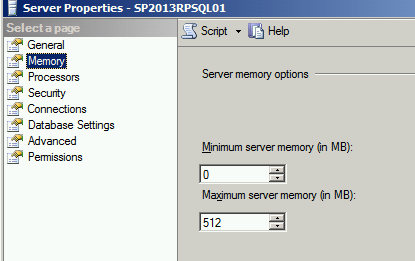

SQL Server Max Memory setting in SSMS (this is a dev VM):

|

|---|

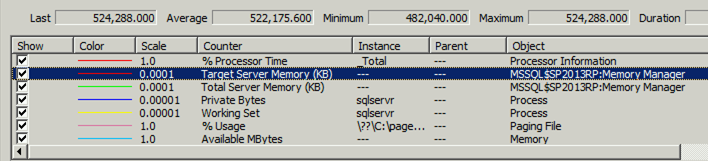

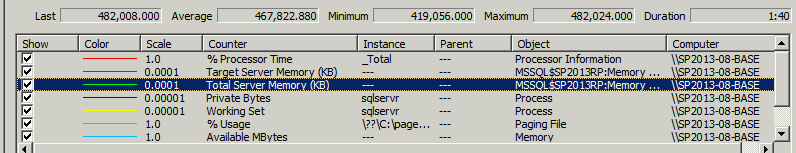

Memory ManagerTotal Server Memory counter. On a busy server you might find that this matches the “max memory” setting:

Memory ManagerTotal Server Memory counter. On a busy server you might find that this matches the “max memory” setting:

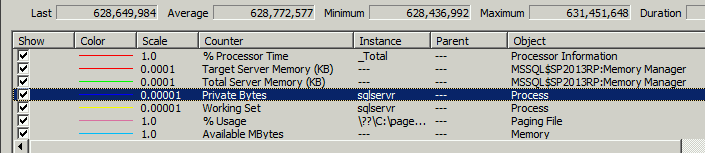

Private bytes counter for SQLServer executable (Private Bytes” equals the ““):

Hopefully that’s enough information to satisfy the cynic in you – the SQLServer process is clearly using more memory than that defined in the “max memory” setting. So that leaves us with the question of what is using approximately 140 MB (27% of the buffer cache in this particular case) outside of the buffer pool.

I refer you to by Bob Ward which lists out memory usage outside of the buffer pool.

To illustrate one example, a 64-bit SQL server configured with 64 GB memory and 16 CPU cores would need 1408MB, or 1.4 GB memory to create the To reiterate the point, this memory is required outside of that configured using the “max memory” setting.

There is no hard and fast rule on this but I normally lean towards Jonathan Kehayias’ guidance contained within his

|

“As a general base configuration, for a dedicated SQL Server machine, reserve 1 GB of RAM for the OS, 1 GB for each 4 GB of RAM installed from 4–16 GB, and then 1 GB for every 8 GB RAM installed above 16 GB RAM. This means that, for a server with 64 GB RAM, the starting point for max server memory should be in the 54 GB range, and then tuned higher based on monitoring the MemoryAvailable Mbytes performance counter.”

– Jonathan Kehayias’

|

|---|

I did try to get hold of Jonathan to confirm he was happy for me to repost the above. If you find out that he isn’t please let me knowJ.

I’ve heard that “lock pages in memory” fixes this…

It may help. But doing this alone (without changing the max memory setting) can lead to stability issues. The OS might need that RAM for a reason…

Anyway, the lock pages in memory setting has been and is too big a topic to discuss here.

Summary

The max memory setting in SQL Server is an important configuration option that should be set to limit SQL Servers buffer pool usage.

As shown, it does not define the total amount of memory (RAM) that SQL server can use and we need to ensure that there is sufficient remaining memory for the OS, backup tools, antivirus programs and SQL non-buffer pool usage. Jonathan Kehayias provides guidance on setting the max memory setting in the first instance then tuning based on key memory counters within PerfMon.